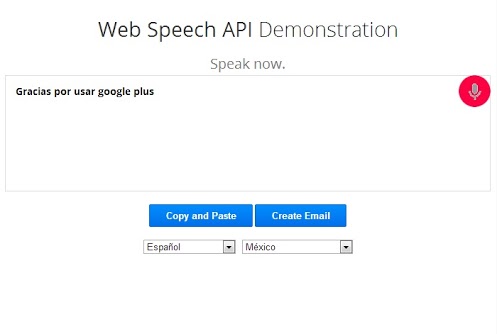

Web Speech API: The latest version of Google Chrome adds support for the Web Speech API, which allows web apps to include voice recognition.

The silence-detection loop schedules itself with `requestAnimationFrame(loop)`, so it runs about every 60th of a second to check the analyser.
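The polling loop mentioned above could be sketched as follows. This is a minimal sketch under stated assumptions, not the original script: `createSilenceTracker` is a hypothetical helper, and the 500 ms `silenceDelay` and -80 dB `minDecibels` defaults are assumed values. `detectSilence` relies on the Web Audio API and only runs in a browser.

```javascript
// Hypothetical helper: tracks sound/silence transitions from per-frame samples.
function createSilenceTracker(onSoundEnd, onSoundStart, silenceDelay = 500) {
  let silenceStart = null; // time of the last frame that contained sound
  let triggered = false;   // fire onSoundEnd only once per silence event

  return function update(hasSound, time) {
    if (silenceStart === null) silenceStart = time; // first frame seen
    if (hasSound) {
      if (triggered) {
        triggered = false;
        onSoundStart(); // sound resumed after a reported silence
      }
      silenceStart = time;
    }
    if (!triggered && time - silenceStart > silenceDelay) {
      triggered = true;
      onSoundEnd(); // quiet for longer than silenceDelay
    }
  };
}

// Browser-only wiring (assumed defaults): polls an AnalyserNode once per frame.
function detectSilence(stream, onSoundEnd, onSoundStart,
                       silenceDelay = 500, minDecibels = -80) {
  const ctx = new AudioContext();
  const analyser = ctx.createAnalyser();
  ctx.createMediaStreamSource(stream).connect(analyser);
  analyser.minDecibels = minDecibels;

  const data = new Uint8Array(analyser.frequencyBinCount); // will hold our data
  const update = createSilenceTracker(onSoundEnd, onSoundStart, silenceDelay);

  function loop(time) {
    requestAnimationFrame(loop); // check again on the next frame
    analyser.getByteFrequencyData(data);
    update(data.some(v => v > 0), time); // any bin above the dB floor counts as sound
  }
  requestAnimationFrame(loop);
}
```

Note that in this sketch `onSoundStart` fires only after a silence has first been reported, so the tracker always begins by waiting for a quiet period.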

Google Chrome is a browser that combines a minimal design with sophisticated technology to make the web faster, safer, and easier. Chrome loads a set of voices remotely, so if your operating system does not have international voices installed, just use Chrome. We're going to walk through a three-step process to create a page that speaks the same text in multiple languages.

Here is how you could attach the silence detector to a SpeechRecognition. Start with `const magic_word = #YOUR_MAGIC_WORD#`, then initialize the SpeechRecognition object with `let recognition = new webkitSpeechRecognition()`. In the result handler, the check `if (transcripts.some(t => t.indexOf(magic_word) > -1))` tells you whether the magic word was heard. The microphone stream is handed to the detector with `.then(stream => detectSilence(stream, stopSpeech, startSpeech))`.

Inside the detector, `const streamNode = ctx.createMediaStreamSource(stream)` wraps the microphone stream, a `Uint8Array` sized by the analyser's `frequencyBinCount` holds the data, and `let triggered = false` makes sure the callback fires only once per silence event.
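The magic-word check could look roughly like the sketch below. The helper names (`extractTranscripts`, `containsMagicWord`, `attachMagicWord`) are hypothetical, and the case-insensitive comparison and the use of `resultIndex` to skip already-seen results are choices made for this example rather than something the original fragments show.

```javascript
// Collect the transcripts of every alternative, starting at result `fromIndex`.
// Works on a SpeechRecognitionResultList or any array-like of array-likes.
function extractTranscripts(results, fromIndex = 0) {
  return Array.from(results)
    .slice(fromIndex)
    .flatMap(result => Array.from(result, alternative => alternative.transcript));
}

// True if any transcript contains the magic word (case-insensitive, an assumption).
function containsMagicWord(results, magicWord, fromIndex = 0) {
  const needle = magicWord.toLowerCase();
  return extractTranscripts(results, fromIndex)
    .some(transcript => transcript.toLowerCase().includes(needle));
}

// Attach the check to a recognition object and call onHeard when the word appears.
function attachMagicWord(recognition, magicWord, onHeard) {
  recognition.continuous = true;
  recognition.onresult = event => {
    // event.resultIndex marks the first result that changed in this event
    if (containsMagicWord(event.results, magicWord, event.resultIndex)) {
      onHeard();
    }
  };
  return recognition;
}
```

In Chrome this might be used as `attachMagicWord(new webkitSpeechRecognition(), 'hello', () => console.log('magic word heard'))`, with `start()` and `stop()` driven by the silence detector.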

Since your problem is that you can't run the SpeechRecognition (SR) continuously for long periods of time, one way would be to start the SR only when you get some input in the mic. This way, the SR starts only when there is some input, and it looks for your magic_word. If the magic_word is found, you can then use the SR normally for your other tasks. Mic input can be detected with the Web Audio API, which is not bound by the time restriction the SR suffers from. You can feed it a LocalMediaStream from MediaDevices.getUserMedia. For more information on the script, see this answer.

The EditContext API simplifies the process of integrating a web app with advanced text input methods such as VK shape-writing, handwriting panels, speech recognition, and IME compositions. The API improves accessibility and performance, and unlocks new capabilities for web-based editors.

Dictation uses Chrome's Local Storage to automatically save the transcriptions, so you'll never lose your work. Internally, it uses the Web Speech API of Chrome, which is supported in all newer releases of the Chrome browser, including Google Chrome on Android.

Web Speech API Demonstration (YouTube): Add speech recognition to any web page with the new Web Speech API.

The extension page can be opened in a new window, tab or iframe. From this page, request access to the microphone. getUserMedia and SpeechRecognition both share the (persistent) audio permission, so to detect whether audio recording is allowed, you could use getUserMedia to request the permission without activating speech recognition, for instance through `navigator`.
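The permission check described above could be sketched like this. `hasMicrophoneAccess` is a hypothetical helper; it takes the `mediaDevices` object as a parameter and assumes the promise-based `getUserMedia`, treating any rejection as "not allowed".

```javascript
// Request the audio permission via getUserMedia without starting speech recognition.
// Resolves to true if a stream was granted, false if the request was rejected.
async function hasMicrophoneAccess(mediaDevices) {
  try {
    const stream = await mediaDevices.getUserMedia({ audio: true });
    // Only the permission is needed here, so release the microphone right away.
    stream.getTracks().forEach(track => track.stop());
    return true;
  } catch (err) {
    return false; // denied, dismissed, or no microphone available
  }
}
```

On a page, this might be called as `hasMicrophoneAccess(navigator.mediaDevices)` before starting recognition.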
The Web Speech API provides basic tools that can be used to create interactive, voice-enabled web apps. It can already be used by Chrome extensions, even in the background page and extension button popups. The fact that it works is not necessarily an intended feature, and I have previously explained how it works and why it works in this answer to "How to use webrtc insde google chrome extension?". The previous explanation is about WebRTC, but it applies equally to Web Speech, and can be used as follows. First, instantiate a `webkitSpeechRecognition` instance and start recording. Then, if a permission error is detected (`onerror` being triggered with `event.error === 'not-allowed'`), open an extension page (chrome-extension:///yourpage.html).
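The two steps above could be sketched as follows. The function names are hypothetical, and opening the page with `chrome.tabs.create` is only one of the options mentioned (a tab rather than a window or iframe); `yourpage.html` is the placeholder page name from the text.

```javascript
// True when the recognition error means microphone access was refused.
function needsMicrophonePermission(event) {
  return event.error === 'not-allowed';
}

// Start recording and call openPermissionPage if access is refused.
function startRecognition(recognition, openPermissionPage) {
  recognition.onerror = event => {
    if (needsMicrophonePermission(event)) {
      openPermissionPage();
    }
  };
  recognition.start();
  return recognition;
}

// Extension-only: open the page that will request microphone access.
function openPermissionPage() {
  chrome.tabs.create({ url: chrome.runtime.getURL('yourpage.html') });
}
```

Inside an extension this might be used as `startRecognition(new webkitSpeechRecognition(), openPermissionPage)`; the opened page would then request the microphone with `getUserMedia`.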
